Title: Harnessing active engagement in educational videos: Enhanced visuals and embedded questions

Authors: Greg Kestin and Kelly Miller

First author’s institution: Harvard University

Journal: Physical Review Physics Education Research, 18, 010148 (2022)

By complete coincidence, in March 2020, unaware of the profound shift in American education that was about to happen, we covered a study exploring the benefits of virtual physics demonstrations compared to live, in-person demonstrations. The authors claimed that students learned more from the virtual demos, often scoring 25-30% better on tests of the material covered in those videos than students who watched the live demo. Furthermore, the students who viewed the virtual demonstrations didn’t seem to enjoy them any less than the students who saw the demos live. Yet the authors couldn’t explain why the virtual demonstrations seemed to help students learn more.

Now, nearly 2 years later, the authors appear to have found their answer. A combination of visual cues and well-timed questions in the video seemed to do the trick. However, the authors found that both need to be present to see any benefit from watching a video of a demonstration. Either one on its own makes little difference.

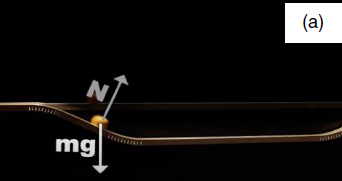

To reach that conclusion, the authors created a set of 4 videos for each of the two demonstrations in the original paper. The first demo is the “shoot the monkey” demo, where the instructor aims a cannon at a stuffed monkey and hits it midair, demonstrating the independence of the horizontal and vertical components of motion. In the second demo, the instructor rolls two balls along two tracks, a shorter flat track and a longer dipped track. Despite having to travel a longer distance, the ball that follows the dipped track wins the race. Screenshots of these demos are shown in Figures 1 and 2.
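The physics behind “shoot the monkey” can be checked with a few lines of arithmetic. The sketch below assumes the standard version of the demo (the monkey is released at the instant the cannon fires, and air resistance is ignored); the function name and the numbers are made up for illustration and do not come from the paper.

```python
import math

G = 9.81  # gravitational acceleration in m/s^2


def heights_at_intercept(distance, height, speed):
    """Return (projectile_y, monkey_y) at the moment the projectile
    reaches the monkey's horizontal position.

    Assumes the cannon sits at the origin, is aimed straight at the
    monkey's starting point, and the monkey drops when the cannon fires.
    """
    angle = math.atan2(height, distance)  # aim directly at the monkey
    vx = speed * math.cos(angle)          # horizontal velocity (constant)
    vy = speed * math.sin(angle)          # initial vertical velocity
    t = distance / vx                     # time to cover the horizontal gap
    projectile_y = vy * t - 0.5 * G * t**2
    monkey_y = height - 0.5 * G * t**2    # free fall from rest
    return projectile_y, monkey_y


# Hypothetical setup: monkey 5 m away and 2 m up, three launch speeds.
for speed in (8.0, 12.0, 20.0):
    p, m = heights_at_intercept(distance=5.0, height=2.0, speed=speed)
    print(f"speed={speed:5.1f} m/s  projectile={p:.3f} m  monkey={m:.3f} m")
```

Both objects fall by the same amount in the same time, so the two heights agree at every launch speed. A slow shot simply meets the monkey lower down (in this idealized model, possibly below floor level).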

For each of the demos, the authors created one video that included both graphic overlays explaining the physics as it happened and frequent questions the viewer had to answer, one video that included only the graphic overlays, one video that included only the questions, and one video that included neither, serving as the control. In all cases, the videos had the same narration.
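The four versions form a 2×2 design: overlays on or off, crossed with questions on or off. Here is a minimal sketch of how such conditions could be enumerated and handed out. The balanced, shuffled assignment is an assumption for illustration; the summary above does not say how participants were actually assigned, and the function names are hypothetical.

```python
import itertools
import random


def build_conditions():
    """Return the four (overlays, questions) combinations of a 2x2 design."""
    return list(itertools.product([True, False], repeat=2))


def assign_conditions(participant_ids, seed=0):
    """Shuffle participants, then deal them out to conditions in turn.

    This balanced scheme is an assumption, not the paper's procedure.
    """
    rng = random.Random(seed)
    ids = list(participant_ids)
    rng.shuffle(ids)
    conditions = build_conditions()
    return {pid: conditions[i % len(conditions)] for i, pid in enumerate(ids)}


conditions = build_conditions()
for overlays, questions in conditions:
    label = "control" if not (overlays or questions) else "treatment"
    print(f"overlays={overlays!s:5}  questions={questions!s:5}  ({label})")
```

The `(False, False)` cell is the control video; comparing it with the other three cells is what lets the effect of each feature be separated from the effect of the combination.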

The authors expected that the graphic overlays would help highlight the important ideas students were supposed to get from the demonstrations, which prior work has demonstrated is important. Likewise, because active engagement has been shown to be better for learning compared to passively absorbing information, the questions were designed to prime viewers for what was going to happen next in the video. That is, the questions were about future material rather than what had already happened in the video.

The authors then recruited 300 “workers” from Amazon’s Mechanical Turk platform to watch one of the versions of the videos. Previous research by one of the authors found that crowdsourced participants were an effective substitute for “real” students in a course.

Each worker completed a short pre-test about the concepts in the demonstration video, watched the video, and then completed a post-test on what they had learned, with questions similar to those on the pre-test. All participants answered the same questions, regardless of which video they watched.

Because the results were similar across both demonstrations, the authors reported their results in aggregate.

The authors found that when the demonstration videos contained both the visuals and the questions, the workers did best on the post-test (Figure 3). However, if only one was present, their scores were similar to those of the workers in the control group.

The authors also looked at the pre-test scores and the reported level of physics experience of the workers to ensure that these didn’t affect the results, and they didn’t. The pre-test scores were roughly similar across conditions, as were the levels of physics experience, with nearly 4 out of 5 workers reporting either no physics experience or only high school experience (similar to what would be expected in a college introductory physics course).

Finally, the authors conducted a linear regression analysis on the post-test scores to see how the various components of the videos influenced the result. They found that, aligned with the post-test score results, graphic overlays only offered a benefit when the in-video questions were also there. Moreover, they found that performance on the in-video questions was not associated with post-test score, suggesting that the questions were doing their job of priming the viewers for the upcoming content rather than serving as a learning check.
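To make the “only together” pattern concrete, here is a minimal sketch of a regression with an interaction term on a 2×2 design. With two dummy-coded factors and their interaction, the least-squares coefficients are exactly differences of cell means, so no fitting library is needed. The scores are invented for illustration, and the paper’s actual model may include other terms (such as pre-test score) that this sketch leaves out.

```python
from statistics import mean


def fit_two_by_two(scores):
    """Coefficients of score ~ overlay + question + overlay:question.

    `scores` maps (overlay, question) cells, coded 0/1, to lists of
    post-test scores. For this saturated model the least-squares
    solution reduces to differences of cell means.
    """
    m = {cell: mean(values) for cell, values in scores.items()}
    return {
        "intercept": m[(0, 0)],                # control mean
        "overlay": m[(1, 0)] - m[(0, 0)],      # overlays alone vs. control
        "question": m[(0, 1)] - m[(0, 0)],     # questions alone vs. control
        # extra gain from having both, beyond the two separate effects
        "interaction": m[(1, 1)] - m[(1, 0)] - m[(0, 1)] + m[(0, 0)],
    }


# Made-up post-test scores (percent), NOT data from the paper.
example_scores = {
    (0, 0): [50, 52, 48],  # neither (control)
    (1, 0): [51, 49, 53],  # overlays only
    (0, 1): [50, 51, 49],  # questions only
    (1, 1): [64, 66, 65],  # both
}

coefs = fit_two_by_two(example_scores)
for name, value in coefs.items():
    print(f"{name:12s} {value:6.2f}")
```

In this toy data the `overlay` and `question` coefficients are close to zero while `interaction` is large, which is the shape of result the summary describes: each feature adds little alone, and the benefit shows up in the combination.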

Overall, the authors concluded that graphic overlays describing the physics, along with regular questions to prime the viewer for what is coming up in the video, are key reasons that video demonstrations seem to result in better learning, and that they must be used together to get the intended effect. The authors did note that additional tests should be conducted to ensure that the results generalize both to students enrolled in a physics course and to other concepts in physics. Perhaps there is something special about classical mechanics that lends itself well to these types of video demonstrations and wouldn’t generalize to other topics. Only time will tell.

Figures used under CC BY 4.0. Header image is based on Figure 6 in the paper.

I am a postdoc in education data science at the University of Michigan and the founder of PERbites. I’m interested in applying data science techniques to analyze educational datasets and improve higher education for all students.